In just under a decade, cognitive computing will become part of our daily lives and will change large and important fields such as healthcare, education, business, banking, retail, and HR.

In the right hands, this new technology will benefit humanity. Read on to find out how!

Humanity faces critical challenges: to surmount them, we have to focus on human quality of life and environmental impact.

Cognitive computing is a field I’ve been passionate about for quite some time now. My research tells me that cognitive computing is the next big innovation wave, poised to redefine markets. Some say that cognitive computing represents the third era of computing: around 1900, machines could tabulate sums, and in the 1950s, programmable systems arrived. Cognitive systems come next.

What is Cognitive Computing?

Simply put: a computer with a brain that thinks and behaves like a human being.

It’s not so hard to believe. Concepts like automation, machine learning, and artificial intelligence sounded strange before they were invented and applied to modern problems. Today, these technologies improve our lives at home and at work, powering everything from handwriting recognition and facial identification to behavioral pattern detection and other tasks that require cognitive skills.

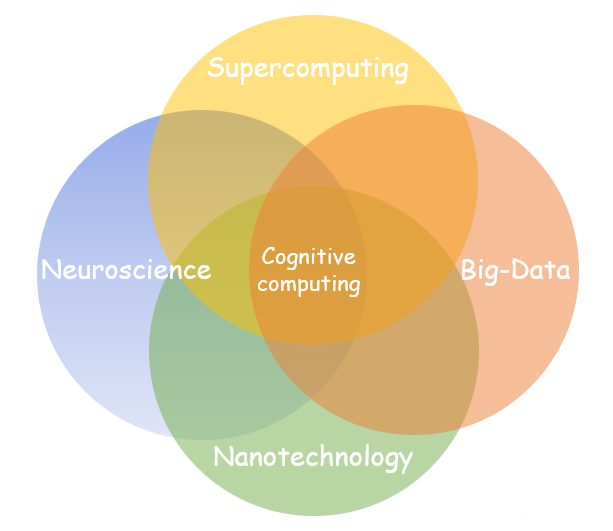

Cognitive computing comes from a mashup of cognitive science (the study of the human brain and how it functions) and computer science. It sits at the intersection of neuroscience, supercomputing, nanotechnology, and big data.

How does Cognitive Computing work?

Cognitive computing allows computers to mimic the way the human brain works. It uses self-learning algorithms based on data mining and pattern recognition to generate solutions to a wide variety of problems. To achieve these feats, according to the Cognitive Computing Consortium, a system must be adaptive, interactive, iterative, stateful, and contextual. A system missing any of these attributes falls short of cognitive computing.
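To make "self-learning" and "stateful" a little more concrete, here is a minimal toy sketch in Python. It is only an illustration under my own assumptions, not how Watson or any real cognitive system works: the class name `AdaptiveClassifier`, the labels, and the numbers are all made up. The classifier remembers every labeled example it has seen (stateful) and updates its idea of each category as new examples arrive (adaptive), then recognizes a new input by finding the closest category.

```python
import math
from collections import defaultdict


class AdaptiveClassifier:
    """Toy nearest-centroid classifier (hypothetical, for illustration only)."""

    def __init__(self):
        self.sums = {}                   # label -> running sum of feature vectors
        self.counts = defaultdict(int)   # label -> number of examples seen

    def learn(self, features, label):
        """Fold one labeled example into the stored state."""
        if label not in self.sums:
            self.sums[label] = [0.0] * len(features)
        self.sums[label] = [s + x for s, x in zip(self.sums[label], features)]
        self.counts[label] += 1

    def predict(self, features):
        """Return the label whose average example is closest, or None if untrained."""
        if not self.sums:
            return None

        def distance(label):
            centroid = [s / self.counts[label] for s in self.sums[label]]
            return math.dist(centroid, features)

        return min(self.sums, key=distance)


# Made-up two-number "measurements" with made-up labels.
model = AdaptiveClassifier()
model.learn([1.0, 1.0], "low risk")
model.learn([9.0, 8.0], "high risk")
print(model.predict([2.0, 1.5]))
```

Each call to `learn` changes what later calls to `predict` return, which is the adaptive, iterative behavior described above in miniature. Real systems add the interactive and contextual parts, which this sketch leaves out.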

Key Players Implementing Cognitive Technologies

IBM is the pioneer of this technology: the company has invested billions of dollars in big data analytics, and now spends close to one-third of its R&D budget on developing cognitive computing technology.

Microsoft, Google, and Facebook have also shown a longstanding interest in cognitive computing. These companies invest heavily to develop better products with the help of this technology.

How Cognitive Computing Will Change Lives

In an era of cognitive computing, one of the fields that will benefit the most is healthcare.

Cognitive computing will help collate pathogenic and patient data (including patient history, journal articles, best practices, and diagnostic tools), analyze that vast quantity of information, and provide a recommendation based on real-time needs. This will expand the doctor’s ability to treat patients efficiently and effectively, processing volumes of data to streamline decision-making and providing a roadmap to better patient prognoses. In the end, patients will do better because their doctors will have a better guide to speeding treatment along the right course.

“Cognitive healthcare is real, is here and it can change almost everything about healthcare,” according to Ginni Rometty, IBM’s CEO.

Cognitive computing is already being applied to a number of different problems. For example:

Watson for Oncology helps physicians quickly identify key disease information in a patient’s medical record, analyze relevant evidence for and against different treatments, and explore treatment options.

The world’s first cognitive cooking application, created with IBM’s Watson, offers an original recipe for every meal while considering dietary restrictions, personal preferences, and even the kinds of food you have in your refrigerator. An app like this could save a lot of time and energy for people with diabetes, for example.

Project Debater, developed by IBM, is the first AI system that can debate humans on complex topics. Its goal is to help people build persuasive arguments and make well-informed decisions based on facts.

The examples could go on: cognitive computing systems have the potential to replicate or improve human processes in any field where large quantities of complex data need to be processed and analyzed to solve problems, including finance, law, and education.

Because it can automate processes and learn like humans, cognitive computing has heightened concern about machines replacing humans in the workplace. Yet cognitive technology can’t work without the support of human intelligence, since we are the ones feeding intelligence into the system.

Before you start thinking about machine domination à la The Matrix or Terminator, remember that a Skynet takeover scenario is as fictional as those movies. Even if AI ends up replacing humans in work environments, we will always need human intelligence in decision-making processes.

In our ever-changing world, technological innovation constantly creates new solutions and new challenges. It’s our job to figure out how to use technology to adapt and prepare for them.

The secret to getting ahead is getting started. Stay tuned for more in-depth information (coming soon!) on how to explore the cognitive computing field and get ready for the future.