When you started developing your first PHP project, you never would have thought that some of your biggest challenges wouldn’t come from your codebase. But your days look a lot different than you expected.

You’re handling downtime once or twice a week, you know more than you ever wanted to about hard drives, and you’re debugging in production every other day (well, I said debugging, but I didn’t mean Xdebug; I meant var_export and debug_backtrace to a log file).

You met a friend over tea the other day, and a new question popped up in the middle of your discussion: how does your release pipeline work?

So you started to explain the process: “I go there … create that … log in there … run a command … verify that there …”, and then you realized: you are the release pipeline! If you’re not in the office, it doesn’t happen!

This is 2019: there is a better way, and no, you don’t have to refactor 100 things to take advantage of new technologies or practices. But you must start somewhere, and in this case, I assume you are either using AWS for your PHP project or are open to starting.

WHY? AWS allows you to get started on the right foundation, develop more efficiently and deploy faster to deliver more value to your customers.

Let me show you the best-case scenario and you can pick what features and services you want to use.

Solution

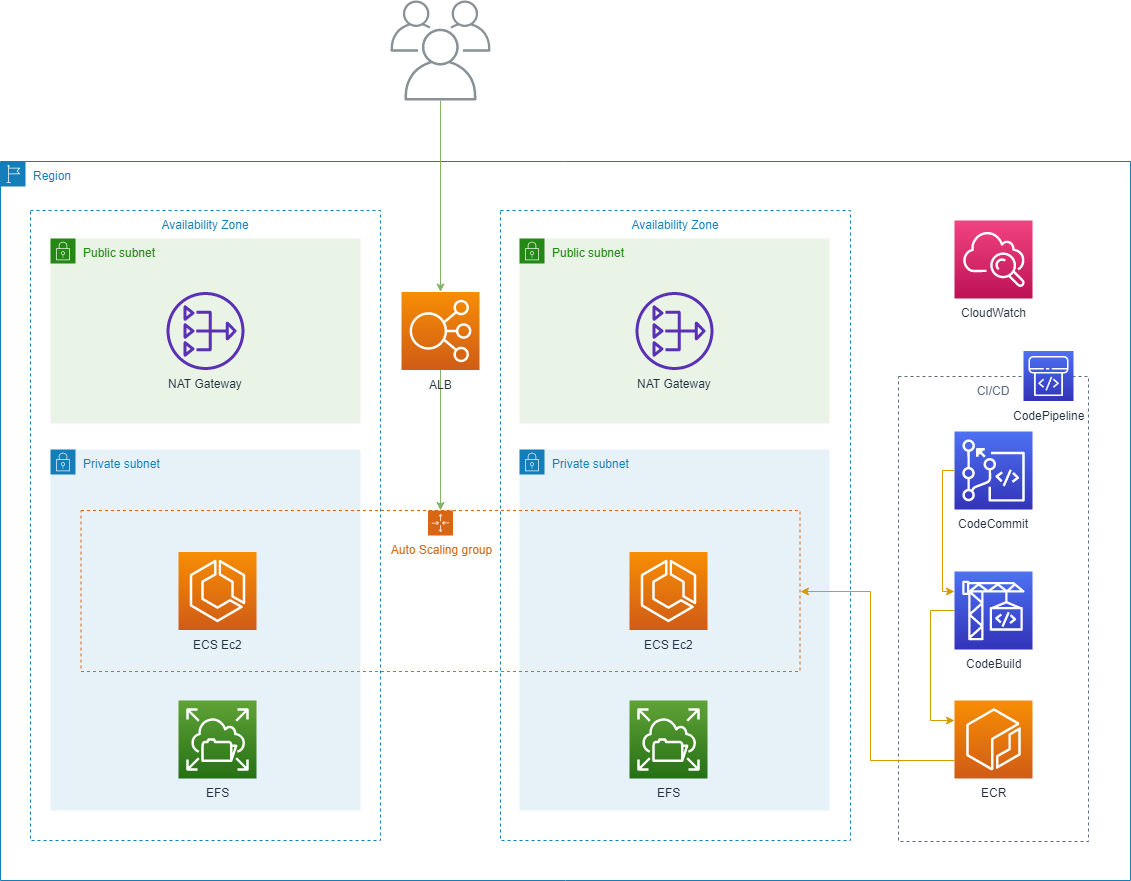

Let’s start with your app! We’ll assume you use containers and those containers need to mount a distributed shared storage.

Docker Cluster

First things first: we need to set up a Docker cluster, so we have somewhere to run those containers of yours. The AWS native service for containers is ECS (Elastic Container Service), and it needs one or more virtual machines (EC2) to work. This is fairly easy if you use “Infrastructure as Code”; for more details, watch the webinar replay.

The whole idea with “Infrastructure as Code” is that systems and devices which are used for running software are treated as if they were themselves software.
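To make the idea concrete, here is a minimal sketch of a cluster described as data that lives in your repository. It builds a small CloudFormation template in Python and prints it as JSON. The logical ID `AppCluster` and the cluster name `php-app-cluster` are assumptions made up for this example, and a real template would also need the EC2 instances, networking, and IAM roles.

```python
import json


def build_cluster_template(cluster_name: str) -> dict:
    """Return a minimal CloudFormation template describing one ECS cluster."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Docker cluster for the PHP project (illustrative only)",
        "Resources": {
            # "AppCluster" is a hypothetical logical ID chosen for this sketch.
            "AppCluster": {
                "Type": "AWS::ECS::Cluster",
                "Properties": {"ClusterName": cluster_name},
            }
        },
    }


# The cluster name is an assumption, not something the article prescribes.
template = build_cluster_template("php-app-cluster")
template_json = json.dumps(template, indent=2)
print(template_json)
```

Because the template is just text, it can be reviewed, versioned, and rolled back like the rest of your code.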

Distributed Storage

I mentioned distributed storage, so that means NFS of some sort. Let me present the AWS managed service for NFS, called EFS (Elastic File System). Its biggest upsides are:

- You don’t have to manage NFS servers

- You don’t have to provision a fixed amount of storage; it scales as you need with no interaction from you

- You pay around $0.08 per GB per month
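Since you only pay for what you store, a rough monthly estimate is simple arithmetic. The sketch below uses the approximate $0.08 per GB figure quoted above; the sample sizes are made up, and actual pricing depends on region and storage class.

```python
PRICE_PER_GB_MONTH = 0.08  # USD, the approximate figure quoted above


def monthly_storage_cost(stored_gb: float, price_per_gb: float = PRICE_PER_GB_MONTH) -> float:
    """Estimated monthly bill in USD for the data actually stored."""
    if stored_gb < 0:
        raise ValueError("stored_gb must be non-negative")
    return round(stored_gb * price_per_gb, 2)


# Hypothetical volumes of shared files, in GB.
for size_gb in (50, 250, 1000):
    print(f"{size_gb} GB -> ${monthly_storage_cost(size_gb):.2f} per month")
```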

I’ve had enough issues with distributed storage in the past (let’s say I didn’t always get a full night’s sleep because of GlusterFS in its beta days), so I really like EFS.

Again, using something like EFS is great when you don’t have the time or resources to change your app to use S3, which I highly recommend doing if you can.

Load Balancer

After adding the cluster, we need to spread the load, but if you have multiple services or microservices, a classic load balancer is not enough!

This is because you need to route traffic to different microservices based on path or host.

Traditionally, for the host situation, people just added one load balancer per service (which gets expensive fast with microservices). For the path situation, you needed something like HAProxy, which is not the best option because now you have another thing to manage.

AWS offers ALB (Application Load Balancer), which gives us advanced routing (host, path, and more) without having to manage anything. Check out this image from the documentation, which shows how easy it is to route to your microservices.
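To show what host-based and path-based routing means in practice, here is a small simulation of the decision the load balancer makes for each request. It is a sketch of the concept, not the ALB implementation: the service names, hosts, and paths are hypothetical, and `fnmatchcase` is a stand-in whose wildcard syntax is a bit broader than what ALB rules accept.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int                # lower number is evaluated first
    target: str                  # hypothetical service name
    host: Optional[str] = None   # e.g. "api.example.com", wildcards allowed
    path: Optional[str] = None   # e.g. "/orders/*"

    def matches(self, host: str, path: str) -> bool:
        # In this sketch hosts compare case-insensitively, paths case-sensitively.
        host_ok = self.host is None or fnmatchcase(host.lower(), self.host.lower())
        path_ok = self.path is None or fnmatchcase(path, self.path)
        return host_ok and path_ok


# All names below are made up for illustration.
RULES = [
    Rule(priority=10, target="api-service", host="api.example.com"),
    Rule(priority=20, target="orders-service", path="/orders/*"),
    Rule(priority=30, target="users-service", path="/users/*"),
]
DEFAULT_TARGET = "web-frontend"


def route(host: str, path: str) -> str:
    """Return the first matching rule's target, or the default target."""
    for rule in sorted(RULES, key=lambda r: r.priority):
        if rule.matches(host, path):
            return rule.target
    return DEFAULT_TARGET


print(route("api.example.com", "/v1/ping"))
print(route("www.example.com", "/orders/42"))
print(route("www.example.com", "/"))
```

One load balancer with three rules replaces what would otherwise be one load balancer per service plus a proxy for the paths.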

Autoscaling

Now that we have a cluster, storage, and a load balancer, we should add some autoscaling to handle traffic surges and reduce costs for your PHP project. We can do this on two levels: the cluster and your services.

You might ask: why do we need two levels? Isn’t this a bit too complicated?

If you add another server to your Docker cluster and keep the same number of containers (with CPU and memory limits), how much would that help? Not much: the new server sits idle until more containers are started on it.
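A toy model makes the point about two levels easier to see. Assume, purely for illustration, that every server has room for a fixed number of containers; the number of containers actually running is then limited both by what you asked for and by what fits in the cluster.

```python
def running_containers(instances: int, slots_per_instance: int, desired_containers: int) -> int:
    """Containers that actually run: capped by the request and by cluster capacity."""
    cluster_slots = instances * slots_per_instance
    return min(desired_containers, cluster_slots)


# Hypothetical numbers: 4 containers fit on each server.
baseline = running_containers(instances=2, slots_per_instance=4, desired_containers=6)
more_servers_only = running_containers(instances=3, slots_per_instance=4, desired_containers=6)
more_containers_only = running_containers(instances=2, slots_per_instance=4, desired_containers=12)
both_levels = running_containers(instances=3, slots_per_instance=4, desired_containers=12)

print(baseline, more_servers_only, more_containers_only, both_levels)
```

Scaling only the servers leaves the count unchanged, scaling only the services hits the capacity ceiling, and scaling both gives you the containers you asked for.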

If you want to simplify things and only care about one level of autoscaling, you can use “Serverless Containers” (ECS Fargate), but at the time of writing it doesn’t support EFS.

This means you must switch the storage to S3, and you can’t always change the application.

Interested in learning more about “Serverless Containers”?

Subscribe to the upcoming webinar.

Choosing the right scaling metrics is a complex topic, so I will just share what metrics you can use.

For scaling the cluster, you’ll want to check out the EC2 metrics. Be aware that to use the memory metrics you have to install the CloudWatch agent.

For your microservices, you have your Cluster and Service metrics, all available in the same place: CloudWatch.

Actually, there is yet another option: since we are using an ALB, we can scale based on how many requests we have or how many errors we see. The full list of available metrics is on this documentation page.
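As an illustration of scaling on request count, the sketch below picks a container count that brings the requests handled by each container back to a target value. This is a simplified proportional model, not the algorithm AWS uses, and the target, minimum, and maximum are assumptions you would tune for your own service.

```python
import math


def desired_task_count(
    current_tasks: int,
    requests_per_task: int,
    target_requests_per_task: int,
    min_tasks: int = 1,
    max_tasks: int = 10,
) -> int:
    """Container count that brings the per-container request rate back to the target."""
    if current_tasks < 1 or target_requests_per_task < 1:
        raise ValueError("current_tasks and target_requests_per_task must be at least 1")
    total_requests = current_tasks * requests_per_task
    wanted = math.ceil(total_requests / target_requests_per_task)
    # Clamp to the configured bounds so a spike or a lull cannot run away.
    return max(min_tasks, min(max_tasks, wanted))


# Hypothetical traffic levels against a target of 1000 requests per container.
print(desired_task_count(4, 1500, 1000))               # traffic surge
print(desired_task_count(4, 200, 1000, min_tasks=2))   # quiet period
print(desired_task_count(4, 5000, 1000))               # surge beyond the maximum
```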

Release Pipeline

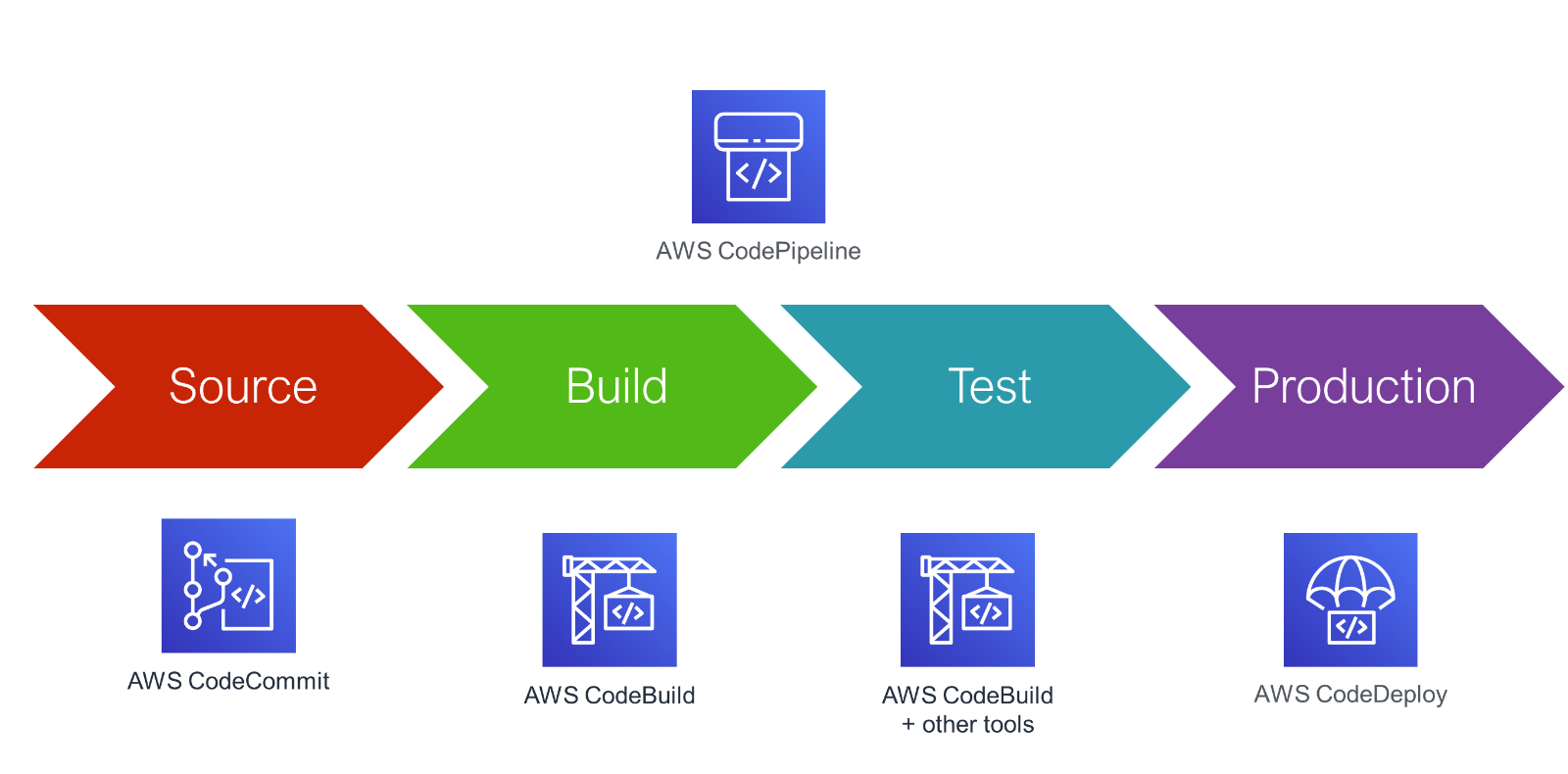

To implement a practical CI/CD pipeline, we start with CodeCommit, which is just a hosted Git service. You push your code there (application code and Dockerfile), which starts CodeBuild.

CodeBuild starts a serverless container of your choosing, runs a list of commands that you define (composer install, minify static files, build the container image), and then pushes the image to ECR (Elastic Container Registry), your hosted, private Docker registry.

CodePipeline detects that a new version of your image exists and automatically deploys it in your ECS cluster.
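To give a feel for the build step, here is a sketch that assembles the kind of command list you might hand to CodeBuild, grouped the way a build specification groups its phases. The account ID, region, repository name, and tag are placeholders, and the minification step is left out because it depends on your tooling.

```python
from typing import Dict, List


def image_uri(account_id: str, region: str, repository: str, tag: str) -> str:
    """Full ECR image reference for one tagged build."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def build_phases(account_id: str, region: str, repository: str, tag: str) -> Dict[str, List[str]]:
    """Shell commands for each build phase, in the order they would run."""
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    uri = image_uri(account_id, region, repository, tag)
    return {
        "pre_build": [
            # Authenticate Docker against the private registry.
            f"aws ecr get-login-password --region {region} | "
            f"docker login --username AWS --password-stdin {registry}",
        ],
        "build": [
            "composer install --no-dev --optimize-autoloader",
            f"docker build -t {uri} .",
        ],
        "post_build": [f"docker push {uri}"],
    }


# Every value below is a placeholder chosen for this example.
phases = build_phases("123456789012", "eu-west-1", "php-app", "abc123")
for phase, commands in phases.items():
    for command in commands:
        print(f"[{phase}] {command}")
```

Tagging each image with something unique, such as the commit hash, makes it easy to see which version is deployed and to roll back.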

There you go, a fully automated pipeline for deploying your microservices. AWSome!

To conclude, by letting AWS do some of the heavy lifting for your PHP project, you get a load-balanced, autoscaling Docker cluster with a fully automated release pipeline for your microservices!

Learn more about serverless containers and service meshes during the next webinar I’ll host: “Taking your Python microservices to the next level with a Service Mesh in AWS”.
